Your Team Should Not Be Writing Test Scripts. Here's Why - and What to Do Instead.

Add up every hour your engineers spent last sprint writing test selectors, debugging flaky CI runs, and patching broken scripts after a routine UI refactor. What does that number look like?

Most engineering leaders who do that exercise arrive at an uncomfortable conclusion...

Test automation has quietly become one of the most expensive forms of technical debt their teams carry. Unlike debt that at least created product value on the way in, this debt was incurred solely to validate the product - and it can require as much maintenance as the product itself to stay functional.

So, the conventional solution - ADD TOOLING.

- Use a better framework.

- Adopt Playwright instead of Selenium.

- Add GitHub Copilot to accelerate script writing.

- Wire up an MCP server so an AI can generate tests from a live browser.

Each move addresses a symptom. None addresses the cause.

The cause is that test scripts are the wrong abstraction for modern software. They couple quality validation to the current mechanical state of your DOM - the one thing guaranteed to change every sprint. Every UI refactor, design system update, and component rename regenerates the maintenance burden from scratch.

How We Ended Up Owning a Second Product Nobody Wanted

Modern test automation frameworks were transformative when they arrived. Selenium launched in 2004, when web applications changed slowly and XPath selectors survived months unchanged. The economics made sense: write a script once, run it hundreds of times, catch regressions.

In 2025, those economics have inverted. Many production applications now deploy multiple times per day. Design systems are refactored frequently. Component libraries get renamed in bulk. The DOM your test was written against last Tuesday may be structurally different this Tuesday.

What engineering teams ended up building is a second product - a test automation framework with its own architecture, its own backlog, its own on-call engineers, and its own technical debt. This product produces zero customer value. It exists solely to validate the first product, and it requires as much maintenance as the first product to stay functional.

At my previous companies, we had an entire sprint board dedicated to tracking test automation. We had a large team, and most of the time, besides writing scripts to test the product, we were constantly building and maintaining a second product of our own: the framework.

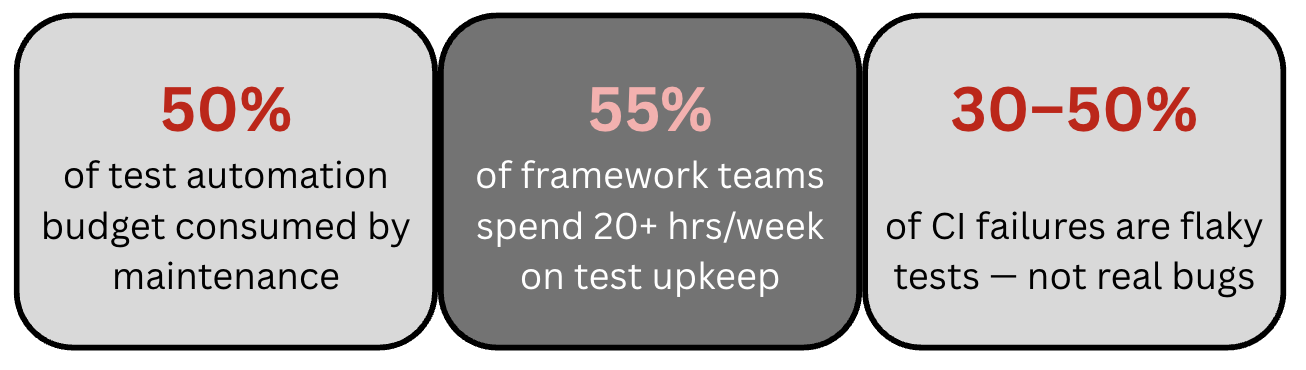

The real cost iceberg

When engineering managers assess their automation costs, they see the surface: tooling licenses, time to write tests, CI infrastructure. What sits below the waterline is far larger:

- Locator maintenance: Every CSS selector, XPath, and data-testid is a hard dependency on the current DOM. UI refactors - even those that change nothing functionally - cascade into broken tests.

- Framework upgrade cycles: Major functionality changes in the web pages require coordinated framework-level updates that derail automation efforts for entire sprints.

- Flaky test investigation: Industry data shows 30-50% of CI failures in large test suites are framework noise - timing issues, race conditions, stale references. Engineers spend hours re-running pipelines to distinguish real failures from false alarms.

- Tribal knowledge: Automation frameworks become repositories of undocumented decisions. When the engineer who built the framework leaves, understanding of the locator strategy, custom wait helpers, and environment configuration leaves with them.

- Onboarding tax: Every new team member must spend weeks learning framework internals before contributing a single test. This cost never decreases.

- Opportunity cost: Every engineer-hour spent on test infrastructure is an hour not spent building product. For a team with an SDET at $150K/year, even 30% on maintenance is $45,000/year in misdirected talent.
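
The opportunity-cost figure in the list above is simple arithmetic, and it is worth running with your own numbers. A minimal sketch in TypeScript; the function name and its parameters are illustrative assumptions, not part of any tool:

```typescript
// Annual salary spend that goes to test maintenance instead of product work.
// maintenancePercent is a whole-number percentage (30 means 30% of the role).
function misdirectedSpend(annualSalary: number, maintenancePercent: number): number {
  if (maintenancePercent < 0 || maintenancePercent > 100) {
    throw new RangeError("maintenancePercent must be between 0 and 100");
  }
  return (annualSalary * maintenancePercent) / 100;
}

// The example from the text: one SDET at $150K/year, 30% on maintenance.
const perSdet = misdirectedSpend(150_000, 30);
console.log(perSdet); // 45000
```

Swap in your own salary bands and a maintenance share measured from your sprint board rather than estimated from memory.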

The question is not 'do we have test automation?' It is 'what is our automation producing per engineer-hour invested?' If your honest answer is 'we are mostly keeping existing tests passing rather than increasing meaningful coverage,' the model is broken - not the engineers running it.

The Copilot and MCP Trap: Faster Scripts Are Still Scripts

When GitHub Copilot went mainstream in 2022-23, the obvious hypothesis was: if generating test scripts is expensive, let AI generate them instead. When MCP (Model Context Protocol) arrived with Playwright integration, it went further - let an AI agent read the live DOM and generate tests based on actual page state. This really did feel like an aha moment. But was it?

What Copilot actually gives you for test automation

Copilot is an exceptional code completion engine. I have used it many times for product development, and it generates test code faster than I can type it. But for test automation, speed of generation is not the constraint - maintainability is.

- Copilot has no knowledge of your live application. Selectors it generates are based on statistical patterns in its training data, not your actual DOM. Generated tests frequently contain stale, wrong, or fragile locators from day one.

- Copilot does not understand your business logic. A test that looks syntactically correct can be semantically wrong - validating the wrong behaviour, missing the acceptance criteria, or testing a risk that does not exist.

- Every Copilot-generated test still requires human review, execution validation, and ongoing maintenance. You have automated the typing, not the ownership.

- Copilot does not self-heal. When your UI changes next sprint, every generated test that referenced those elements breaks - and you are back to manual maintenance, starting from AI-written code instead of human-written code.

The fundamental Copilot limitation for testing: Copilot accelerates the production of test scripts. It does not change the nature of test scripts - static artifacts that break when the application changes. If your core problem is maintenance overhead, generating scripts faster amplifies the problem over time rather than solving it.

Why Building In-House Frameworks Is the Worst Investment Engineering Teams Make

Every few years, an engineering team with resources decides to build their own test automation framework. The reasoning is always the same: existing tools don't fit our stack perfectly, we want full control, and we can build something more tailored to how we work.

What actually follows is predictable enough to describe as a pattern, and I have been through it myself:

Phase 1: The honeymoon (months 1-3)

The framework is elegant. It solves the specific pain points that motivated the project. The team is energised. Everyone wants to get their hands dirty.

Phase 2: The maintenance trap (months 4-12)

The product changes. New features affect automation that is already written, and enhancements to old features do the same. As new tests are added, tests start failing at random in CI. One of the two engineers who built the framework moves to another team. Tests written by different contributors diverge in style. This is a huge pain, and no one is to blame, because every engineer brings their own perspective. The framework backlog grows. Someone becomes a de facto full-time framework engineer - a role nobody hired for.

Phase 3: The legacy problem (year 2 onward)

The framework now has thousands of tests. Tests break frequently. There is too much flakiness. Migration feels impossible. The 'full control' that motivated the project now functions as a constraint. And the product it was meant to protect ships slower because of it.

| Cost Category | Year 1 Reality | Year 2-3 Compounded |
|---|---|---|
| Framework design and build | 4-8 weeks of senior engineering time | Repeated with every major version change in the product |
| Locator strategy and upkeep | Constant from day one | Grows linearly with application scale |
| Flaky test investigation | 20+ hrs/week for 55% of teams | Gets worse as the suite grows |
| Opportunity cost (SDET) | $140K-$180K/yr total comp in US markets | 30-50% on maintenance = roughly $42K-$90K/yr wasted |

The strategic question is simple: is building and maintaining a test automation framework a core competency your company needs? For almost every team - unless you are building a testing product - the answer is NO.

What the data says about custom frameworks: Teams that moved from custom Selenium/Playwright frameworks to autonomous intent-driven platforms reported a 50% reduction in overall QA cycle time, 80% lower maintenance costs, and a 10x increase in automation velocity - without adding QA headcount. The framework was the bottleneck, not the engineers.

Autonomous Test Execution: The Architecture That Eliminates the Problem

Autonomous test execution is not test automation with better tooling. It is a structurally different model for how quality validation is expressed, run, and maintained over time.

From scripts to intent

In a script-based model, a test is a sequence of imperative instructions:

- navigate here,

- find this element by this selector,

- click it,

- wait for this condition,

- assert this value.

The test describes a mechanism. It knows the how, not the why.

In an intent-driven model, a test is a goal statement: 'A signed-in user should be able to complete a purchase with a saved payment method.' The autonomous execution agent receives that goal, maps it against the current state of the application, determines the mechanism itself, executes it in a real browser, and validates the outcome - without a single selector ever being written by a human.

This is not a marginal improvement in test authoring. It is the removal of the entire layer of abstraction that makes tests brittle. When the checkout button changes its ID, the intent stays valid. The mechanism adapts. No human intervention required.

Script-Based (Playwright/TypeScript)

    import { test, expect } from '@playwright/test';

    // Illustrative locators; a real suite keeps these in a shared module.
    const selectors = {
      username: '#username',
      password: '#password',
      loginButton: 'button[type="submit"]',
    };

    test('checkout with saved card', async ({ page }) => {
      // The relative URL assumes baseURL is set in the Playwright config.
      await page.goto('/checkout');
      // Sign in with placeholder credentials.
      await page.locator(selectors.username).fill('user@example.com');
      await page.locator(selectors.password).fill('password');
      await page.locator(selectors.loginButton).click();
      await page.locator('[data-testid="saved-card"]').click();
      await page.locator('button.confirm-payment').click();
      await expect(page.locator('.order-success-banner')).toBeVisible({ timeout: 5000 });
    });

    // Breaks if: the data-testid changes, the button class changes,
    // the banner selector changes, or timing shifts.
    // Note: to validate the confirmation message, the expected text must be built
    // with the order ID, and the script must be updated if the message moves.

Intent-Driven (DevAssure Invisible Agent)

    - summary: Validate checkout with saved card
      steps:
        - Sign in as a returning user.
        - Navigate to checkout.
        - Select the saved payment method.
        - Confirm the purchase.
        - Verify the order success screen appears with a confirmation message.
      priority: P1
      tags: [promo, billing-toggle, pricing, ui]

    # No selectors. No waits. No brittleness.
    # The UI can change - the intent stays valid.
    # This also validates a confirmation page whose content varies with the order ID.

How autonomous agents execute tests on real browsers

When an intent-driven test is submitted to an autonomous execution agent, it follows a three-stage loop that no static script can replicate:

- Perception. The agent launches a real browser, navigates to the application, and builds a live context model - mapping interactive elements, page structure, navigation patterns, and application state. It understands the application semantically, not just as a collection of DOM nodes.

- Planning. The agent translates the test intent into an execution plan tailored to what it actually finds on-screen. Unlike a script that assumes a fixed structure, the plan is derived fresh from the current application state on every run.

- Execution and adaptation. The agent runs the plan against the live browser. If something has changed since the last run - a button moved, a field renamed, a new step added to a flow - AI Auto Heal detects the drift and updates the internal model automatically. No human diagnosis or locator fix required.
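
The three stages above can be sketched in a few dozen lines of TypeScript. This is a toy model, not DevAssure's implementation: the `UiElement` shape, the `perceive`, `plan`, and `execute` functions, and the keyword matching that stands in for the agent's semantic understanding are all assumptions made for illustration.

```typescript
// A toy model of the perceive -> plan -> execute loop. Everything here is
// hypothetical: a real agent drives a browser and uses a model to plan.
interface UiElement {
  id: string;                           // current DOM id (may change between releases)
  role: "button" | "link" | "textbox";
  label: string;                        // visible or accessible name
}

interface PlannedAction {
  step: string;      // the intent step this action serves
  targetId: string;  // resolved fresh from the current page
}

// Perception: copy what is currently interactive on the page.
function perceive(page: UiElement[]): UiElement[] {
  return page.map((el) => ({ ...el }));
}

// Planning: resolve each intent step to an element by meaning, not by id.
// A naive keyword match stands in for real semantic understanding.
function plan(steps: string[], elements: UiElement[]): PlannedAction[] {
  return steps.map((step) => {
    const words = step.toLowerCase().split(/\W+/).filter((w) => w.length > 3);
    const match = elements.find((el) =>
      words.some((w) => el.label.toLowerCase().includes(w))
    );
    if (!match) throw new Error(`No element found for step: ${step}`);
    return { step, targetId: match.id };
  });
}

// Execution: act on the resolved targets; here we only record the ids used.
function execute(actions: PlannedAction[]): string[] {
  return actions.map((a) => a.targetId);
}

function runIntent(steps: string[], page: UiElement[]): string[] {
  return execute(plan(steps, perceive(page)));
}

const steps = ["Select the saved payment method", "Confirm the purchase"];

const lastWeek: UiElement[] = [
  { id: "saved-card", role: "button", label: "Use saved payment method" },
  { id: "confirm-payment", role: "button", label: "Confirm purchase" },
];
// After a refactor the ids differ, but the labels still express the same function.
const thisWeek: UiElement[] = [
  { id: "pm-option-0", role: "button", label: "Saved payment method" },
  { id: "btn-primary", role: "button", label: "Confirm purchase" },
];

const before = runIntent(steps, lastWeek); // ["saved-card", "confirm-payment"]
const after = runIntent(steps, thisWeek);  // ["pm-option-0", "btn-primary"]
```

The point of the sketch is the shape of the loop: the same two intent steps resolve to different element ids on each run because the plan is rebuilt from whatever the page currently contains.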

Intent vs. snapshot: the critical architectural difference

Both AI-generated Playwright scripts and autonomous execution agents interact with browsers. The difference is what they validate. A Playwright script validates that specific DOM elements exist in specific states - it checks a snapshot. An intent-driven agent validates that a goal was achieved - it checks an outcome. Snapshots break with every UI change. Outcomes are inherently resilient to it.
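
One way to see the distinction is to write both kinds of check against the same simulated page. The `PageState` shape, the order ID, and both functions below are hypothetical, and a real agent would infer the outcome from the rendered page rather than from a tidy object:

```typescript
// Hypothetical, simplified view of a page after checkout.
interface PageState {
  elements: { selector: string; text: string }[];
  order: { id: string; status: "pending" | "confirmed" } | null;
}

// Snapshot check: passes only if one specific selector is present.
function snapshotCheck(state: PageState): boolean {
  return state.elements.some((el) => el.selector === ".order-success-banner");
}

// Outcome check: passes if the purchase succeeded and the user is told so,
// regardless of which element carries the message.
function outcomeCheck(state: PageState): boolean {
  const order = state.order;
  if (order === null || order.status !== "confirmed") return false;
  return state.elements.some((el) => el.text.includes(order.id));
}

// The same successful purchase, rendered before and after a redesign.
const beforeRedesign: PageState = {
  elements: [{ selector: ".order-success-banner", text: "Order A-1042 confirmed" }],
  order: { id: "A-1042", status: "confirmed" },
};
const afterRedesign: PageState = {
  elements: [{ selector: ".toast--success", text: "Thanks! Order A-1042 is on its way" }],
  order: { id: "A-1042", status: "confirmed" },
};

const results = {
  snapshot: [snapshotCheck(beforeRedesign), snapshotCheck(afterRedesign)], // [true, false]
  outcome: [outcomeCheck(beforeRedesign), outcomeCheck(afterRedesign)],    // [true, true]
};
```

The purchase succeeded in both renderings. Only the snapshot check reports a failure after the redesign, and that failure says nothing about the product.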

Self-healing at the architectural level

The single largest driver of test automation cost is locator maintenance. In a large test suite, a single design system refactor can produce hundreds of simultaneous failures, each requiring a human to diagnose and fix. This is not an edge case - it is the routine experience of any team running a mature Selenium or Playwright suite.

Self-healing in an autonomous execution agent is not a feature bolted on top of a script runner. It operates at the core of how the agent represents the application. Because the agent models application elements contextually - understanding what they do, not just where they currently live - when an element changes position or naming, the agent updates its mapping based on functional equivalence. The entire category of locator-related failures is eliminated, not reduced.
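
Mapping by functional equivalence can be illustrated with a small resolver. This is a simplified sketch under stated assumptions, not how any particular product works: the `ElementDescriptor` fields, the scoring weights, and the threshold of 5 are all invented for the example.

```typescript
// What an element does, independent of where it lives in the DOM.
interface ElementDescriptor {
  id: string;
  role: string;     // e.g. "button"
  label: string;    // accessible name
  section: string;  // logical region, e.g. "checkout"
}

// Score (0-10) how well a candidate matches the remembered function of an element.
// The id contributes little, so a rename alone cannot break the match.
function equivalenceScore(known: ElementDescriptor, candidate: ElementDescriptor): number {
  let score = 0;
  if (known.role === candidate.role) score += 3;
  if (known.label.toLowerCase() === candidate.label.toLowerCase()) score += 4;
  if (known.section === candidate.section) score += 2;
  if (known.id === candidate.id) score += 1;
  return score;
}

// Pick the best functional match on the current page, or null if none is close.
function resolve(
  known: ElementDescriptor,
  currentPage: ElementDescriptor[],
  threshold = 5
): ElementDescriptor | null {
  let best: ElementDescriptor | null = null;
  let bestScore = 0;
  for (const candidate of currentPage) {
    const score = equivalenceScore(known, candidate);
    if (score > bestScore) {
      best = candidate;
      bestScore = score;
    }
  }
  return bestScore >= threshold ? best : null;
}

const remembered: ElementDescriptor = {
  id: "confirm-payment", role: "button", label: "Confirm purchase", section: "checkout",
};
// After a design system refactor: new id, same function.
const refactoredPage: ElementDescriptor[] = [
  { id: "btn-secondary", role: "button", label: "Cancel", section: "checkout" },
  { id: "btn-primary", role: "button", label: "Confirm purchase", section: "checkout" },
];

const healed = resolve(remembered, refactoredPage); // the "btn-primary" element
```

A script bound to `#confirm-payment` would fail on this page. The resolver still finds the button because role, label, and section outweigh the id. Returning `null` when nothing scores high enough matters just as much: a missing feature should surface as a real failure rather than be "healed" onto an unrelated element.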

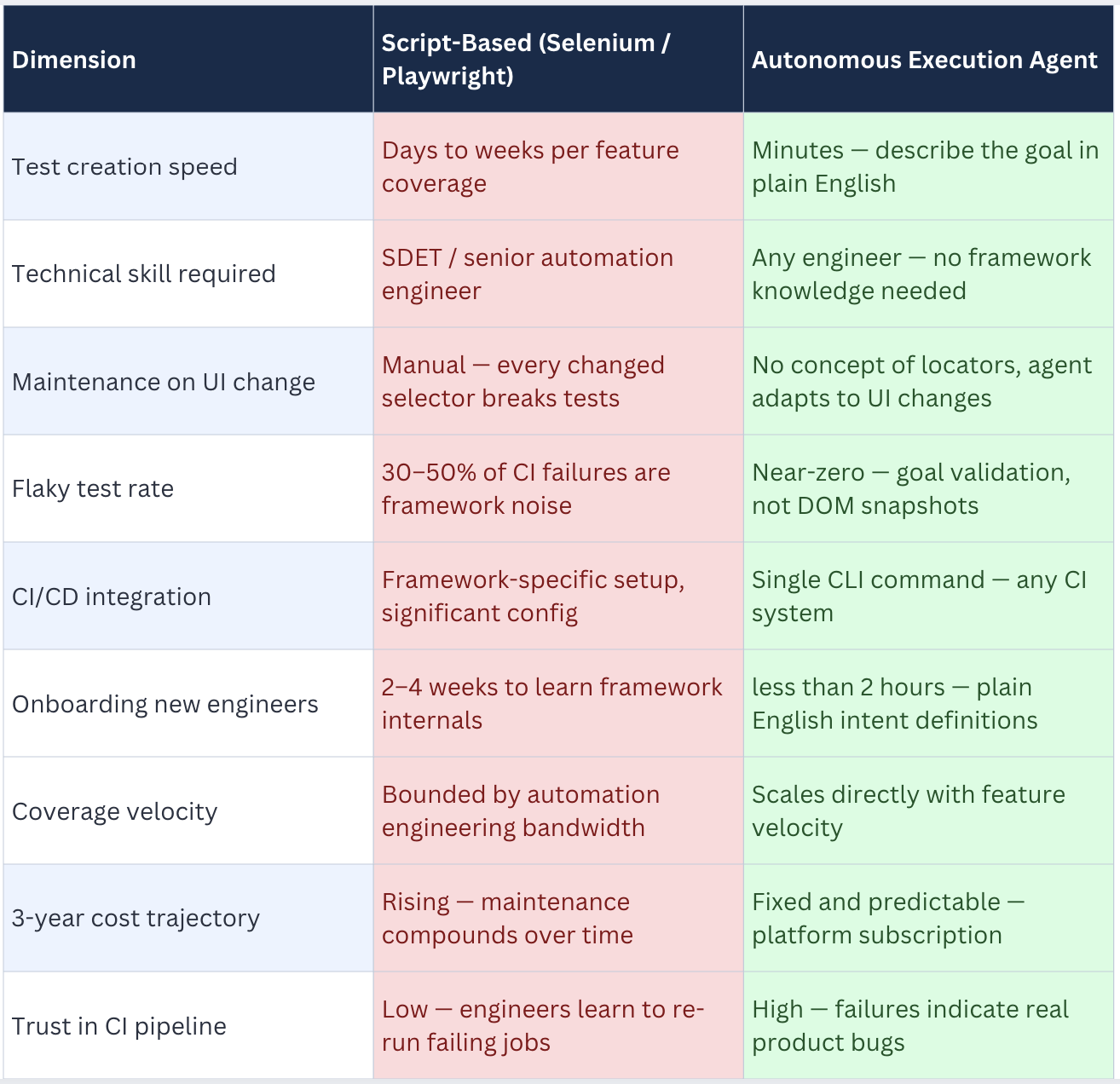

The Full Comparison: Script-Based vs. Autonomous Execution

For engineering managers evaluating a strategic shift, here is how the two models compare across the dimensions that drive team velocity and budget:

What This Means for Your Team

The shift to autonomous test execution is not a tool swap. It is an organisational change - and the second-order effects are as important as the technology.

The most immediate impact is the elimination of the class of work that drives high-calibre engineers to update their CVs: debugging flaky tests, chasing stale selectors, and maintaining a fragile infrastructure they feel responsible for but didn't choose. This work is low cognitive value and deeply demoralising for people hired to solve hard problems.

Autonomous execution redirects that capacity toward architecture, system design, edge-case analysis, and mentorship. The cognitive question shifts from "what broke in the test suite and why?" to "is the product behaviour we defined still correct?" - a vastly more interesting question.

It also changes what skills are valuable on the team. The ability to write Playwright TypeScript with robust locator strategies is no longer a hiring criterion. The ability to articulate test intent clearly - to describe what your application should do - is a skill every capable engineer already has.

The business case is clear once true costs are quantified. If a senior SDET at $160K/year spends 40% of their time maintaining existing test infrastructure rather than increasing coverage, that is $64K/year in misdirected spend on a single headcount. Multiply that across a team. Add the cost of CI pipeline noise slowing every engineer's day. Add the cost of production bugs that ship because the automation suite is too unreliable to trust.

Autonomous execution converts that spend from a rising maintenance burden to a fixed platform cost - typically less than one month of a single engineer's salary for full team access. The ROI calculation is not even close.
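
To sanity-check that claim against your own numbers, a rough comparison might look like the sketch below. All inputs are placeholders: the team size of five is invented, and the platform cost simply encodes the "one month of a single engineer's salary" figure above as an assumed annual cost.

```typescript
interface TeamCostInputs {
  engineers: number;          // engineers who maintain tests
  annualSalary: number;       // per engineer, USD
  maintenancePercent: number; // share of time spent on test upkeep, 0-100
}

// Yearly salary spend absorbed by test maintenance across the team.
function annualMaintenanceSpend(t: TeamCostInputs): number {
  return (t.engineers * t.annualSalary * t.maintenancePercent) / 100;
}

// Assumed platform cost per year: one month of a single engineer's salary.
function assumedPlatformCost(annualSalary: number): number {
  return annualSalary / 12;
}

const team: TeamCostInputs = { engineers: 5, annualSalary: 160_000, maintenancePercent: 40 };

const maintenance = annualMaintenanceSpend(team);          // 320000
const platform = Math.round(assumedPlatformCost(160_000)); // 13333
const ratio = Math.round(maintenance / platform);          // 24
```

The comparison deliberately ignores migration effort and whatever review work remains after the switch, so treat the ratio as an upper bound on the saving, not a forecast.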

There is also a cultural benefit that does not appear on a spreadsheet. When the flaky test problem disappears, engineering culture changes. Developers trust the CI pipeline again. Quality gates become meaningful again. Deployments stop feeling like a minefield.

For the engineering culture overall

The most underrated consequence of removing the script-writing model is what it does to ownership of quality. When writing a test means describing a user goal in a sentence - rather than 40 lines of Playwright boilerplate - developers actually write tests. The friction is low enough that quality becomes genuinely embedded in the development workflow rather than delegated to a separate QA function.

Teams that adopt autonomous execution consistently report coverage increases driven not by QA mandates but by developers who found testing fast enough to just do. That is the shift-left outcome that test automation has always promised and rarely delivered.

DevAssure's Invisible Agent: Autonomous Execution in Practice

DevAssure's Invisible Agent is purpose-built for the model this blog describes - intent-driven, scriptless, fully autonomous test execution against real browsers, APIs, and mobile applications. It is not a Selenium wrapper or a smarter Playwright abstraction. It is an execution layer that makes test scripts architecturally unnecessary.

How it works

The Invisible Agent receives test scenarios expressed in plain English. No code. No selectors. No framework configuration. A typical definition looks like this:

    - steps:
        - Add a few items to the cart.
        - Go to the cart page.
        - Modify the quantities for some items.
        - Validate that the UI reflects the necessary price changes.
        - Complete the checkout using valid data.
        - Validate.
      priority: P1

Each test step is a statement of intent - what a user should be able to do. DevAssure's Invisible Agent handles everything else: element identification, interaction sequencing, wait logic, assertion generation, and adaptation when the application changes.
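
For teams that keep such scenarios in a repository, it can help to lint them before they run. The sketch below models only the fields visible in the examples in this post (`summary`, `steps`, `priority`, `tags`). The `Scenario` type, the `validateScenario` function, and the `P0`-`P9` priority pattern are hypothetical and are not part of DevAssure's documented API.

```typescript
// Shape inferred from the examples in this post; not an official schema.
interface Scenario {
  summary?: string;
  steps: string[];
  priority?: string; // e.g. "P1"
  tags?: string[];
}

// Return a list of problems; an empty list means the scenario looks usable.
function validateScenario(s: Scenario): string[] {
  const problems: string[] = [];
  if (s.steps.length === 0) problems.push("scenario has no steps");
  s.steps.forEach((step, i) => {
    // A one-word step such as "Validate." does not say what to check.
    if (step.trim().split(/\s+/).length < 2) {
      problems.push(`step ${i + 1} is too vague: "${step}"`);
    }
  });
  // Assumed convention: priorities look like P0 through P9.
  if (s.priority !== undefined && !/^P[0-9]$/.test(s.priority)) {
    problems.push(`unexpected priority: ${s.priority}`);
  }
  return problems;
}

const cartScenario: Scenario = {
  steps: [
    "Add a few items to the cart",
    "Go to the cart page",
    "Modify the quantities for some items",
    "Validate.",
  ],
  priority: "P1",
};

const problems = validateScenario(cartScenario);
// -> ['step 4 is too vague: "Validate."']
```

Plain-English steps remove selectors, not the need for precision. A step that names the expected outcome gives the agent, and the next reader, something concrete to verify.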

Key capabilities

- Scriptless execution on real browsers

- Built-in adaptation: When the application changes, the agent detects drift and updates its internal model automatically. No locator failures, no manual fixes, and no separate "self-heal" step to configure.

- VS Code and Cursor extension: The Invisible Agent runs natively inside the editor. Write intent, run tests, see inline results without leaving the IDE.

- Zero maintenance overhead: No selector libraries, no WebDriver versions to manage, no flaky retry configurations, no framework upgrade sprints.


Conclusion - The Right Question Has Changed

For the last decade, the question teams asked was 'What is the best framework for test automation?' Selenium or Playwright. Cypress or TestCafe. Java or TypeScript. These are real decisions with real trade-offs, and generations of skilled engineers have thought carefully about them.

But the right question NOW is different. It is: WHY ARE WE WRITING TEST SCRIPTS AT ALL?

If your answer is 'because that is how test automation works,' it is worth examining whether that belief predates the existence of systems that can execute quality validation autonomously from stated intent. If your answer is 'because we need to own the execution layer,' it is worth calculating what that ownership is actually costing you in engineer-hours, CI pipeline noise, and slowed delivery velocity.

Test scripts were an excellent solution to a 2010 problem. Modern applications that deploy continuously, evolve rapidly, and run across diverse environments have outgrown that solution. The teams that recognise this earliest will gain a compounding advantage in delivery velocity, quality confidence, and talent retention - because nobody wants to spend their career maintaining selectors.

Autonomous execution is not a theoretical alternative. It is running in production at many companies. The infrastructure exists. The economics are clear. The only question is how long your team continues writing scripts.
