Why Personas Matter in Testing

Software testing traditionally focuses on one core question:

Does the application work?

But there is a more important question that often gets ignored:

Does the application work well for the people who actually use it?

Functionality testing ensures that features behave correctly. But real-world applications are used by humans with different abilities, expectations, and behaviors. If testing ignores the human side, teams risk building software that technically works but fails users.

This is where personas in testing become critical.

Testing is not just about validating features — it's about validating experiences.

The Missing Layer in Most Testing Strategies

Most teams test applications like this:

- Verify functionality

- Check API responses

- Validate edge cases

- Ensure new changes don’t break existing features (regression testing)

These are all necessary.

But something important is missing:

User perspective.

Developers and QA engineers are not representative users. They understand workflows, know where buttons are, and intuitively interpret UI patterns.

Real users don’t.

This difference becomes even more pronounced when the application targets a specific audience, such as:

- Kids

- Elderly users

- Non-technical users

- First-time users

- Users with accessibility needs

Testing without considering these personas can easily lead to poor usability.

A Real Example: A Website Designed for Kids

Recently, I came across an educational website targeted at children aged 6–12 years.

The content was excellent. The intention behind the platform was great.

But the experience told a different story.

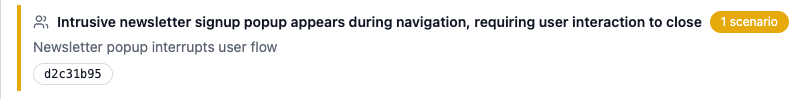

Issue #1: Newsletter Popups for Kids

After landing on the page, a newsletter popup appears within a few seconds.

For adults, this is normal.

But for children?

- Newsletters are irrelevant

- Many kids might not understand what a newsletter is

- Some may not know how to close the popup

Instead of engaging the user, the first interaction becomes confusing or frustrating.

This is a classic example of designing for business metrics instead of user personas.

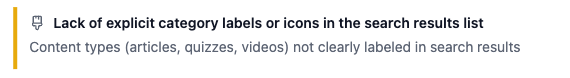

Issue #2: Non-Intuitive Actions

Another issue was how actions were presented on the interface.

Some actions were:

- Hidden and visible only on hover

- Represented by icons without labels

These are common UI patterns for desktop users.

But kids often:

- Don’t explore hover states

- Don’t recognize abstract icons

- Prefer clear visual actions

As a result, many interactions were simply never discovered.
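
Both patterns can at least be flagged mechanically before a real child ever sees the page. The following is a minimal sketch, assuming a hypothetical `UIAction` record that a crawler or test agent has already extracted from the page. The field names and sample actions are invented for illustration and do not come from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class UIAction:
    """One clickable action found on a page (hypothetical record)."""
    name: str
    has_text_label: bool         # False for icon-only buttons
    visible_without_hover: bool  # False if the action appears only on hover


def discoverability_issues(action: UIAction) -> list[str]:
    """Return the reasons a low-familiarity user might miss this action."""
    issues = []
    if not action.visible_without_hover:
        issues.append("hidden until hover")
    if not action.has_text_label:
        issues.append("icon without label")
    return issues


# Sample actions, invented for illustration.
actions = [
    UIAction("Start lesson", has_text_label=True, visible_without_hover=True),
    UIAction("Bookmark", has_text_label=False, visible_without_hover=True),
    UIAction("Share", has_text_label=False, visible_without_hover=False),
]

for action in actions:
    issues = discoverability_issues(action)
    if issues:
        print(f"{action.name}: {', '.join(issues)}")
```

A check like this only finds candidates. Whether a given icon is actually understood by an eight-year-old still has to be confirmed with real users.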

The Hidden Cost of Features Nobody Uses

In this case, roughly 30% of the available actions were never used.

This raises an important question:

Why spend time building features that your users don’t even discover?

Engineering time is expensive.

Feature complexity increases:

- UI clutter

- Testing scope

- Maintenance cost

- Cognitive load on users

Often the best UX improvement is removing unnecessary functionality.

But this insight only emerges when testing is done through the lens of user personas.

The Problem With Internal Testing

A common response from development teams is:

"The UX looks good. Everything seems easy to use."

Of course it does.

Developers:

- Know the product

- Understand workflows

- Expect certain patterns

But developers are not the target users.

This is why usability testing often requires observing real users interacting with the product.

Unfortunately, few teams do this at scale.

Accessibility Has Frameworks. Usability Doesn't.

Accessibility testing has strong frameworks:

- WCAG guidelines

- Automated accessibility scanners

- Screen reader validation

- Contrast checking tools
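
Contrast checking shows why this side of testing automates so well: WCAG 2.x defines contrast as a formula over relative luminance, so a tool can compute a pass or fail answer with no human judgment. A minimal sketch of that calculation follows. The function names are my own, and the 4.5:1 threshold is the WCAG AA requirement for normal-size text.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple[int, int, int]) -> float:
    """Weighted sum of the linearized red, green, and blue channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple[int, int, int], bg: tuple[int, int, int]) -> float:
    """WCAG contrast ratio, from 1 (identical colors) to 21 (black on white)."""
    l1, l2 = relative_luminance(fg), relative_luminance(bg)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


ratio = contrast_ratio((0, 0, 0), (255, 255, 255))
print(round(ratio, 2))  # 21.0
print(ratio >= 4.5)     # True: passes AA for normal text
```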

But usability testing is harder.

There are no universal tools that can automatically validate:

- Whether an interface is intuitive

- Whether actions are discoverable

- Whether a user understands the workflow

Usability depends on real-world behavior, making it harder to standardize.

AI Testing Agents Need Personas Too

AI agents are increasingly used for testing applications.

But most AI-driven testing focuses on:

- Executing functional steps

- Validating responses

- Checking UI states

This still misses something important.

AI agents often behave like perfect testers.

They:

- Click everything

- Discover hidden features

- Navigate flows optimally

Real users don't behave like this.

A child, a new user, or a non-technical user interacts with software very differently.

To truly test usability, AI agents must simulate personas.

They need to answer questions like:

- Would this user discover this feature?

- Would they understand this icon?

- Would this popup confuse them?

- Would they know what to do next?

Testing needs to become behavior-aware, not just function-aware.
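
One way to picture the difference between a "perfect tester" and a persona is as a constrained walk over the site's navigation graph. The sketch below is illustrative only: the `Persona` and `Link` types, the depth limit, and the toy site map are all assumptions made for this example, not a description of how any specific agent works.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Persona:
    name: str
    max_depth: int     # how many screens deep this user is willing to go
    uses_hover: bool   # whether the user ever triggers hover states
    reads_icons: bool  # whether the user can interpret unlabeled icons


@dataclass
class Link:
    target: str
    needs_hover: bool = False
    icon_only: bool = False


def reachable_screens(graph: dict[str, list[Link]], start: str, persona: Persona) -> set[str]:
    """Breadth-first walk that only follows links this persona would notice."""
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        screen, depth = queue.popleft()
        if depth >= persona.max_depth:
            continue
        for link in graph.get(screen, []):
            if link.needs_hover and not persona.uses_hover:
                continue
            if link.icon_only and not persona.reads_icons:
                continue
            if link.target not in seen:
                seen.add(link.target)
                queue.append((link.target, depth + 1))
    return seen


# Toy site map, invented for illustration.
site = {
    "home": [Link("lessons"), Link("settings", icon_only=True)],
    "lessons": [Link("quiz"), Link("share", needs_hover=True)],
    "quiz": [Link("results")],
}

child = Persona("child", max_depth=2, uses_hover=False, reads_icons=False)
tester = Persona("ideal tester", max_depth=10, uses_hover=True, reads_icons=True)

print(sorted(reachable_screens(site, "home", child)))
print(sorted(reachable_screens(site, "home", tester)))
```

The screens the ideal tester reaches but the child does not are the ones worth a closer usability look.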

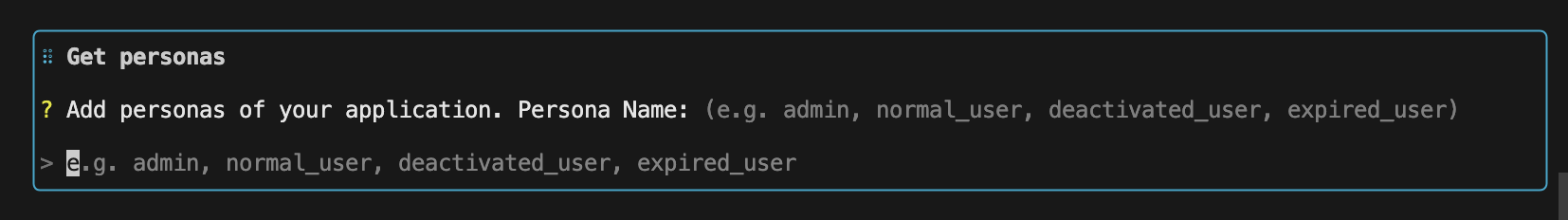

Persona-Driven Testing in DevAssure

This is exactly why we built persona definitions into the DevAssure Invisible QA Agent.

During setup, one of the steps asks users to:

Define your personas

Many users initially ask:

"Why do we need this? I don’t see where personas are used in my tests."

That’s because personas work behind the scenes.

They fundamentally change how the AI agent behaves during testing.

Instead of acting like a generic automation script, the agent adapts its behavior based on the defined persona.

Example personas.yaml file in DevAssure

    user:
      description: Kids who want to learn new and exciting things
      age: 8-12
      technical_familiarity: low
      device: tablet
      behavior:
        - limited exploration
        - prefers visual cues
        - avoids complex interactions

    admin:
      description: Admin user who can manage the content and take actions on conflicting content
      technical_familiarity: high
      device: desktop
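
How a definition like this steers an agent internally is not spelled out here, so the following is only a sketch of the idea, not DevAssure's implementation. It mirrors the example file as a plain Python dict (to avoid a YAML dependency) and derives a few agent settings from it. The `agent_settings` function, its keys, and the depth numbers are all hypothetical.

```python
# Mirrors the personas.yaml example as a plain dict.
PERSONAS = {
    "user": {
        "description": "Kids who want to learn new and exciting things",
        "age": "8-12",
        "technical_familiarity": "low",
        "device": "tablet",
        "behavior": [
            "limited exploration",
            "prefers visual cues",
            "avoids complex interactions",
        ],
    },
    "admin": {
        "description": "Admin user who can manage the content and take actions on conflicting content",
        "technical_familiarity": "high",
        "device": "desktop",
    },
}


def agent_settings(persona: dict) -> dict:
    """Derive hypothetical agent settings from a persona definition."""
    behavior = persona.get("behavior", [])
    return {
        # Touch devices have no hover state, so hover-only controls stay hidden.
        "allow_hover": persona.get("device") == "desktop",
        # Assumed rule: only highly technical personas try unlabeled icons.
        "click_unlabeled_icons": persona.get("technical_familiarity") == "high",
        # Assumed depth limits; the numbers are placeholders.
        "max_depth": 2 if "limited exploration" in behavior else 10,
    }


for name, persona in PERSONAS.items():
    print(name, agent_settings(persona))
```

The same test suite run under the `user` settings and the `admin` settings would then exercise different paths through the application.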